Interview with Yasin Yildirim, AI/ML Engineering Lead at BlueCloud

TL;DR

BlueCloud’s AI/ML Accelerator helps enterprises deploy trusted AI on Snowflake in days instead of months, reducing complexity, cost, and risk while enabling scalable, governed AI applications.

Enterprise AI adoption on Snowflake is accelerating, but most organizations are still stuck at the starting line.

They have the data, they have the platform, and they have the ambition. What they are missing is the connective tissue: the trusted, governed, production-ready engineering foundations that turn Snowflake Cortex from a compelling capability into a running business application.

We sat down with Yasin Yildirim, BlueCloud's AI/ML Engineering Lead and the architect of the AI/ML accelerator suite, to understand the real challenges enterprises are facing, how the AI/ML accelerator addresses them at a structural level, and why Snowflake Cortex is at the heart of every decision the team made.

Why Enterprise AI Projects Stall

Before we get into what the accelerator does, it is worth understanding the real-world problems it was built to solve. Despite enormous investment in data platforms like Snowflake, most enterprise AI projects take far longer than expected, cost far more than budgeted, and too often never reach production at all.

The Cold Start Problem

Every new AI project begins from zero. For organizations without a dedicated AI platform team, which is most of them, this cold start can consume weeks or even months of senior engineering time. By the time a working foundation is in place, business stakeholders have already lost confidence, budgets are under pressure, and the competitive window has narrowed.

The Hallucination and Trust Problem

Deploying a large language model in an enterprise context is not the same as using a consumer chatbot. A hallucinated answer in an internal knowledge assistant can result in a compliance violation, a misinformed commercial decision, or an erosion of user trust. Building the verification pipelines, guardrails, and evaluation frameworks needed to make LLM outputs genuinely trustworthy is a sophisticated engineering challenge. Most teams underestimate it badly and pay for it later.

The Fragmentation and Re-invention Problem

Even in organizations that have successfully built one AI application, the next project largely starts from scratch again. There is no standardized architecture. Session management is handled differently. The result is a fragmented portfolio of custom applications that are expensive to maintain, difficult to audit, and impossible to scale without proportional headcount growth. The institutional knowledge lives in individuals rather than in reusable, governed systems.

The Specialization Bottleneck

Reliable enterprise AI requires a rare combination of skills: deep knowledge of large language models, expertise in data platform engineering, and the ability to build production-grade software with proper security and governance. Organizations that depend on assembling that skill set for every project are creating a structural bottleneck that caps their ability to scale AI adoption.

The Snowflake Underutilization Problem

Snowflake Cortex provides exceptional managed AI capabilities, all within the security boundary of a customer's existing Snowflake environment. But those capabilities are only as valuable as the applications built on top of them. Without standardized engineering patterns for orchestration, verification, and deployment, many organizations end up using only a fraction of what Cortex can do. The potential is there; the framework to realize it is not.

From Cold Start to Production: Inside BlueCloud's AI/ML Accelerator with Yasin Yildirim

Q: Let's start from the top. What exactly is the BlueCloud AI/ML Accelerator?

Yasin: The AI/ML Accelerator is a suite of modular, production-ready data applications built on a standardized Hexagonal Architecture that functions like plug-and-play microservices for enterprise AI. Each accelerator uses a common architectural template for domain modeling, business logic, and external adapters, and delivers a specific, self-contained AI capability, such as trusted RAG or automated data documentation. The accelerators can be deployed independently or combined, depending on business requirements.

Q: And what problem does it solve for customers at a fundamental level?

Yasin: It completely solves the "cold start" and standardization problems in enterprise AI adoption by offering out-of-the-box, trusted templates that turn weeks of custom engineering into days of simple configuration. Rather than reinventing the wheel for every use case, data teams can immediately deploy and customize governable, production-ready AI tools that seamlessly integrate with their existing Snowflake environments.

Q: How is it different from standard ML pipelines on Snowflake?

Yasin: Unlike standard ML pipelines that focus narrowly on backend model training, feature engineering, and predictive scoring for data scientists, these accelerators are end-to-end intelligent applications designed for immediate business impact. They package advanced LLM orchestration, verification logic, and user interfaces into modular microservices.

Architecture and the MLOps Lifecycle of AI/ML Accelerator

Q: What stages of the MLOps lifecycle does the accelerator cover?

Yasin: The accelerator suite abstracts and covers the entirety of the applied AI lifecycle, from data ingestion and prompt orchestration to testing, validation, and user-facing deployment, without the heavy infrastructure burden of traditional MLOps. Because the accelerators are designed as standalone microservices with built-in evaluation and guardrails, they bypass complex model training pipelines to focus directly on application assembly, governed execution, and observability.

Q: You talk about four lifecycle buckets. Can you walk us through those?

Yasin: In the context of applied AI and these accelerators, the lifecycle translates to four core buckets:

- Discover and Understand: analyzing and preparing the underlying data or schemas.

- Build and Configure: using the templates to assemble logic and prompts.

- Verify and Validate: running automated checks, hallucination mitigation, and golden tests.

- Deploy and Operate: serving the application as a Streamlit app, a native app, or another front end, and monitoring its real-world performance.
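
To make the "golden tests" in the Verify and Validate bucket concrete, here is a minimal sketch of a golden-test harness. It is an illustration only, not BlueCloud's implementation: `GoldenCase`, `run_golden_tests`, and `stub_answer` are hypothetical names, and the check assumes a simple "answer must contain these phrases" rule rather than any particular evaluation framework.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class GoldenCase:
    """A question paired with phrases a trusted answer must contain (hypothetical)."""
    question: str
    must_contain: Tuple[str, ...]


def run_golden_tests(
    answer_fn: Callable[[str], str], cases: List[GoldenCase]
) -> List[Tuple[str, List[str]]]:
    """Return (question, missing phrases) for every case that fails."""
    failures = []
    for case in cases:
        answer = answer_fn(case.question).lower()
        missing = [p for p in case.must_contain if p.lower() not in answer]
        if missing:
            failures.append((case.question, missing))
    return failures


def stub_answer(question: str) -> str:
    """Stand-in for the application under test; a real run would call the deployed app."""
    canned = {
        "What is the refund window?": "Refunds are accepted within 30 days.",
        "What is the SLA?": "We aim for high availability.",
    }
    return canned.get(question, "I don't know.")


cases = [
    GoldenCase("What is the refund window?", ("30 days",)),
    GoldenCase("What is the SLA?", ("99.9%",)),
]
failures = run_golden_tests(stub_answer, cases)
print(failures)
```

In this sketch the second case fails because the stub answer never states the expected figure, which is the kind of regression a golden set is meant to surface before deployment.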

Q: How does it work technically under the hood?

Yasin: Every accelerator is built upon a strict Hexagonal Architecture, sometimes called Ports and Adapters, that completely decouples the user interface, business orchestration, and external integrations. User inputs flow from a presentation layer into centralized services that orchestrate prompts and pipeline logic, which in turn communicate with Snowflake's AI and data services through modular, easily swappable adapter classes.
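
For readers unfamiliar with Ports and Adapters, the pattern can be sketched in a few lines of Python. This is a generic illustration under stated assumptions, not the accelerator's actual code: `CompletionPort`, `EchoAdapter`, and `QuestionService` are hypothetical names, and the stub adapter stands in for one that would call Snowflake's AI services through a real session.

```python
from dataclasses import dataclass
from typing import Protocol


class CompletionPort(Protocol):
    """Port: the only thing the core service knows about an LLM backend."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoAdapter:
    """Stub adapter for local runs (hypothetical); a production adapter
    would wrap a Snowflake session and call a managed AI service instead."""

    prefix: str = "stub-answer"

    def complete(self, prompt: str) -> str:
        # Echo the last prompt line so the flow is visible without a backend.
        return f"{self.prefix}: {prompt.splitlines()[-1]}"


@dataclass
class QuestionService:
    """Core orchestration: builds the prompt and delegates to the port."""

    llm: CompletionPort
    system_prompt: str = "Answer using only the provided context."

    def answer(self, question: str, context: str) -> str:
        prompt = f"{self.system_prompt}\nContext: {context}\nQuestion: {question}"
        return self.llm.complete(prompt)


# The presentation layer (for example a Streamlit page) would only ever call the service.
service = QuestionService(llm=EchoAdapter())
result = service.answer("What is the refund window?", "Refunds are accepted within 30 days.")
print(result)
```

Because `QuestionService` depends only on the port, swapping the stub for a different adapter requires no change to the orchestration or the user interface, which is the decoupling described above.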

Built to Work Seamlessly with Snowflake Cortex and Snowpark

Q: How does this accelerator align with Snowflake Cortex, Snowpark ML, and Semantic Layer initiatives?

Yasin: The entire suite is natively aligned with Snowflake's product roadmap. Snowflake Cortex is the foundational engine powering everything, handling large language model completions, vector embeddings, hybrid search, and document parsing, while Snowpark underpins robust, secure execution. All AI processing stays within the customer's existing Snowflake environment by design, maximizing their platform investment without any data leaving their security boundary. Most strategically, the suite includes specific tooling designed to instantly generate and validate Semantic Views, actively accelerating adoption of Snowflake's Analyst and Semantic Layer features.

Q: Does it use Snowpark ML or other frameworks?

Yasin: The accelerators rely heavily on Snowpark Python for secure session management and native SQL execution, combined with Streamlit for the frontend experience. While they don't depend on heavy traditional ML frameworks like PyTorch or Snowpark ML's model training APIs, they leverage standard Python libraries to orchestrate Cortex's managed AI services effectively.

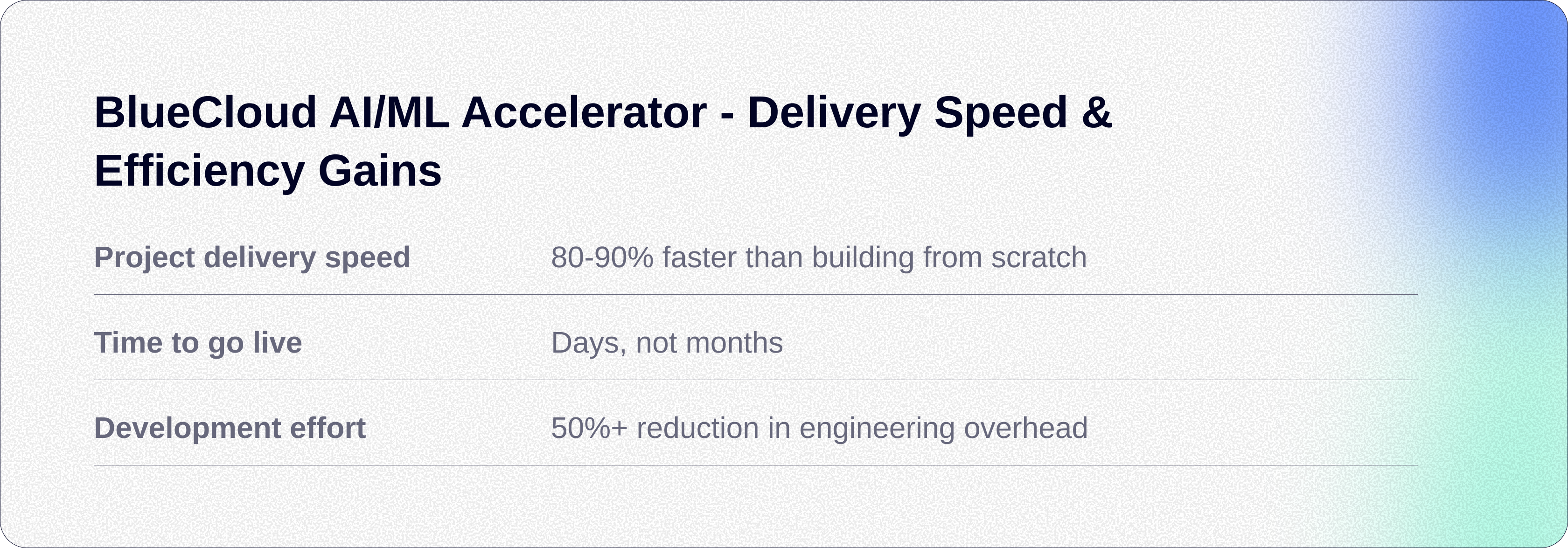

Delivery Speed and Efficiency Gains of AI/ML Accelerator

Q: How much time does the accelerator actually save in delivery?

Yasin: By eliminating the need to design architectures from scratch, write boilerplate integration code, or manually build complex guardrail systems, the accelerators reduce typical project delivery timelines by 80% to 90%. Use cases that traditionally require weeks or months of dedicated engineering can be configured, tested, and pushed to production in a matter of days.

Q: Where do the biggest efficiency gains come from specifically?

Yasin: The massive time savings stem directly from the reusable hexagonal architecture template, which standardizes error handling, session management, and UI scaffolding alongside pre-built, complex logic like self-correcting pipelines and validation loops. Engineers simply fork the base template, swap out specific prompts or domain models, and immediately focus on the unique business logic rather than infrastructural plumbing. The template is designed to work with Cortex Code, making it easy to extend, modify, maintain with up-to-date services, and debug when needed.
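
As a rough illustration of what a "self-correcting pipeline" with a validation loop can look like, the sketch below retries generation and feeds each validation error back into the next attempt. It is a generic pattern shown under assumptions, not the template's real logic: `self_correcting_run`, `fake_generate`, and `validate_sql` are hypothetical, and the generator is a stub rather than a language model call.

```python
from typing import Callable, Optional, Tuple


def self_correcting_run(
    generate: Callable[[str, Optional[str]], str],
    validate: Callable[[str], Optional[str]],
    request: str,
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Generate, validate, and retry with the validator's feedback.

    Returns the first valid output and the number of attempts used.
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = generate(request, feedback)
        error = validate(candidate)
        if error is None:
            return candidate, attempt
        feedback = error  # passed to the next generation attempt
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {feedback}")


def fake_generate(request: str, feedback: Optional[str]) -> str:
    """Stub generator: produces a typo first, then a fix once it sees feedback."""
    return "SELECT 1" if feedback else "SELEC 1"


def validate_sql(candidate: str) -> Optional[str]:
    """Toy validator: returns None when valid, otherwise an error message."""
    if candidate.upper().startswith("SELECT "):
        return None
    return "statement must start with SELECT"


output, attempts = self_correcting_run(fake_generate, validate_sql, "return the number one")
print(output, attempts)
```

In a real pipeline the validator might compile or dry-run the generated statement; the loop structure stays the same, and the attempt cap keeps cost bounded.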

Business Outcomes and Customer Value of AI/ML Accelerator

Q: What business outcomes does the accelerator enable at a high level?

Yasin: The accelerator drives immediate ROI in three ways: by drastically accelerating the deployment of trusted AI capabilities, by democratizing application development so that even people without an AI/ML background can ship products that previously required specialized AI teams, and by strictly enforcing governance standards. Ultimately, it allows businesses to confidently leverage Generative AI on their proprietary data without risking security, accuracy, or unbounded development costs.

Q: What types of ML projects can this accelerator support?

Yasin: Because of its flexible plug-and-play design, the template can support virtually any applied generative AI project, ranging from knowledge-base chatbots and intelligent search engines to automated data analysts, semantic modeling pipelines, and text summarization tools. Any scenario that requires securely chaining user intent, business logic, and language models against enterprise data is a perfect fit.

Ready to Ship Trusted AI on Snowflake? BlueCloud's AI/ML Accelerator Gets You There

The real measure of any AI platform is not what it can do in a demo. It is how quickly and reliably it delivers value in production. That is precisely where BlueCloud and Snowflake are strongest together.

These AI/ML accelerators provide the tangible, deployable pathways that move customers from experimenting with Cortex to consuming it at scale, closing the engineering and trust gaps that too often keep promising AI investments stuck at the proof-of-concept stage.

By solving the hard problems (hallucination detection, semantic modeling, and governed execution), BlueCloud acts as an execution catalyst, turning Snowflake's AI capabilities into outcomes that enterprise clients can see, measure, and build on.

If you are looking to accelerate your Snowflake AI journey and get to production faster, BlueCloud's AI/ML Accelerators are built exactly for that. Explore our AI and Machine Learning services page to learn how we can help you move from experimentation to impact, in days, not months.

Stay tuned for our upcoming eBook: Yasin’s practitioner deep dive into the BlueCloud accelerator templates powering real-world deployments, from self-correcting SQL pipelines to hallucination-mitigating RAG applications and beyond.